Newsroom

News

19.09.2024 - EDHEC Grande Ecole

Welcome to the first cohort of students in the Data Science & AI for Business track

On 2 September, the first cohort of students in the Data Science & AI for Business track started the academic year on the Nice campus, with Victor Planas-Bielsa, director of this new track. The day was also marked…

17.09.2024 - EDHEC

Read the 4th EDHEC-Risk Climate newsletter

"Delivering Research Insights on Double Materiality to the Financial Community".

This fourth newsletter from the EDHEC-Risk Climate Impact Institute is entirely (and only) in English.

16.09.2024 - Giving

Back-to-school solidarity campaign: support our students!

With the new academic year, life resumes on the EDHEC campuses, marked by the arrival of new students. We are launching a solidarity campaign to give them the best opportunities to thrive. Every gesture counts in making a difference.…

08.09.2024 - EDHEC

FT Masters in Management 2024: EDHEC's Grande Ecole Programme ranks 2nd in France and 4th worldwide

EDHEC Business School makes a historic breakthrough to 4th place worldwide in the annual Masters in Management ranking published by the Financial…

05.09.2024 - EDHEC

Two EDHEC students honoured at the sporting event of the summer

EDHEC Business School is proud to announce the victory of two of its students at the major sporting competition of summer 2024: Manon…

04.09.2024 - EDHEC

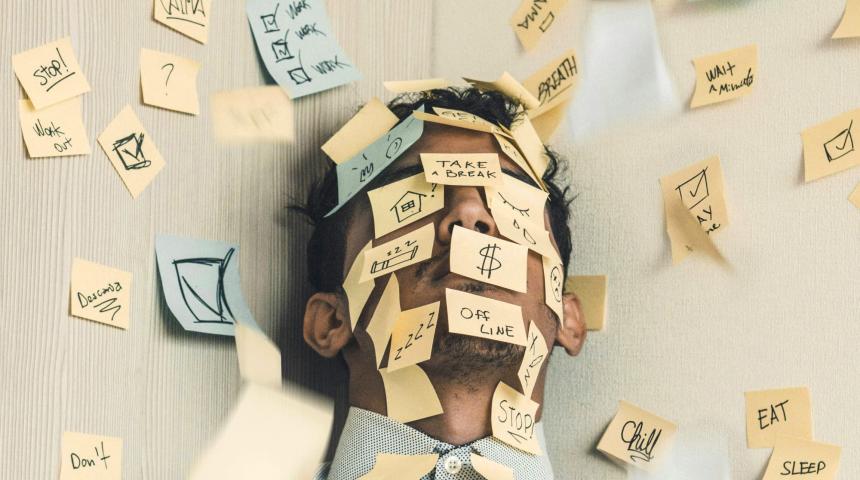

(Call for participation) Entrepreneurs: understanding stress, mental health and well-being to manage them better

The image of almost glamorous superstar entrepreneurs, combining tenacity and success, is unfortunately persistent. In reality, high levels of stress…

- 17.09.2024 - 19.09.2024 - Career Events, Online | Online

Job Dating Online ❘ Apprenticeships, internships and first jobs

Are you looking for your future talent (apprentices, interns or junior hires), with positions to fill for January 2025?

Join our first recruitment event of the year!

- 21.09.2024 - Studyrama | Fairs | In person

Salon Studyrama - Paris

Meet the admissions team of the EDHEC International BBA post-baccalaureate programme on 21 September 2024 at the Studyrama fair in Paris.